In 2023 and 2024 combined, on-chain exploits drained more than four billion dollars from DeFi protocols, exchanges, and NFT platforms. And here is the part most people do not talk about openly: the majority of those attacks were not technically brilliant. They were simply fast.

Attackers moved within seconds. Many exploits wrapped up inside a single block. By the time any human analyst noticed something was off, funds were already traveling through mixers and jumping across chains. The gap between attack execution and human response was not measured in minutes. In several documented cases, it was just a handful of seconds.

This is precisely why traditional security models are breaking down in blockchain environments. Static audits review code before deployment. Perimeter defenses watch entry points. But neither approach accounts for what happens after a contract is live and functioning exactly as written, yet being exploited in ways no one anticipated during design.

The problem is architectural, not just technical. Blockchain infrastructure is inherently public, permissionless, and real-time. Anyone can read state data, anyone can submit transactions, and anyone can compose protocols together in unintended ways. That openness is what makes blockchain powerful. It is also what makes it uniquely dangerous when no one is monitoring it.

Real-time threat monitoring for blockchain is the structural answer to this gap. Understanding how it works is no longer optional for teams running serious infrastructure.

What Real-Time Threat Monitoring for Blockchain Actually Means

Before jumping into architecture and detection logic, it is worth being precise about what real-time blockchain threat monitoring actually is, because the phrase gets used loosely and often incorrectly.

Real-time threat monitoring for blockchain means continuous, automated surveillance of on-chain activity. This includes transaction patterns, contract state changes, wallet behaviors, and event logs, all tracked with the goal of detecting anomalies the moment they occur rather than hours or days later.

This is fundamentally different from a security audit, which is a point-in-time code review done before deployment. It is also different from basic dashboards that notify teams when gas prices spike or a wallet balance drops. True real-time monitoring involves behavioral modeling, where a system builds a picture of normal activity and flags meaningful deviations from that picture as they happen.

Importantly, effective monitoring does not just detect threats. In mature implementations, it responds to them automatically. When anomaly thresholds are crossed, systems can trigger smart contract pause mechanisms, halt token transfers, or escalate incidents to security teams instantly. Detection without response capability is better than nothing, but it is not the complete picture.

The practical scope of real-time threat monitoring covers several layers: mempool observation before transactions confirm, event log parsing as blocks settle, state variable tracking across contracts, and cross-wallet behavioral analysis. Each layer catches different threat types, and together they create a surveillance architecture that genuinely matches the speed of modern attacks.

How On-Chain Attacks Actually Unfold

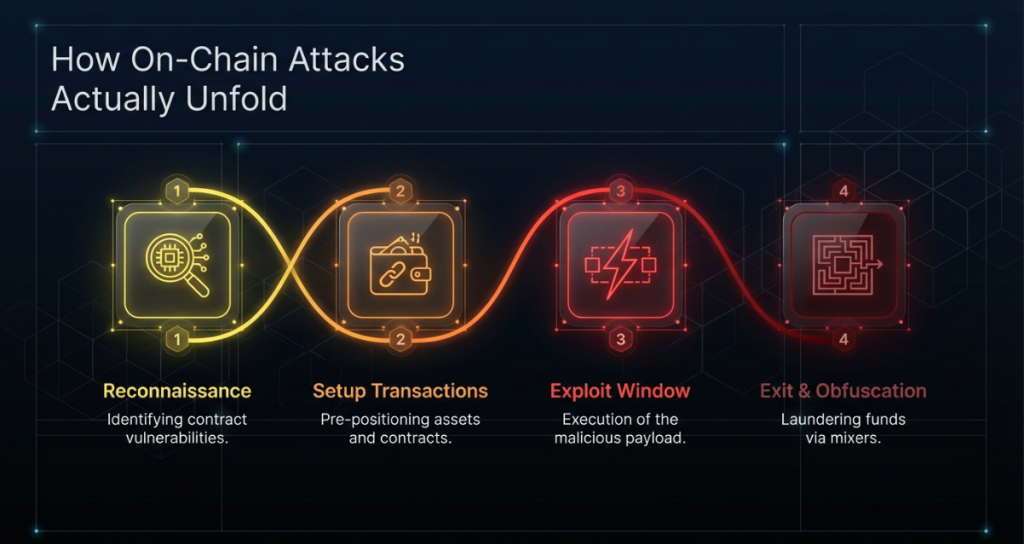

To fully appreciate why real-time monitoring matters so much, it helps to walk through how modern on-chain attacks are structured. Contrary to popular belief, most sophisticated attacks are not random. They follow predictable, observable patterns.

The Reconnaissance Phase

Most serious attackers do not strike blindly. Before executing, they study target protocols carefully, sometimes for days or even weeks. They analyze contract logic, map fund flows, test edge cases on testnets, and identify timing windows where liquidity is high and monitoring is likely minimal.

This phase happens largely off-chain, so on-chain monitoring has little or no visibility into it. Still, understanding that this phase exists is important, because it means attackers arrive prepared, with specific contracts targeted and specific sequences planned.

The Setup Transactions

Right before most exploits, attackers execute several preparatory transactions. These might include acquiring large token positions, deploying helper contracts, or distributing funds across wallets to obscure the origin of capital. Individually, these setup transactions often look completely ordinary. Taken together as a sequence, however, they form a recognizable pre-attack signature that behavioral monitoring systems can flag.
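One way to picture a "pre-attack signature" is as an ordered-subsequence check over a wallet's recent activity. The sketch below is minimal and rests on several assumptions. It assumes upstream decoding has already labeled each event. The step labels in `SETUP_SIGNATURE` and the 300-block window are illustrative only, not a known attacker fingerprint.

```python
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical step labels produced by upstream decoding; not a published attacker fingerprint.
SETUP_SIGNATURE = ("funded_from_mixer", "contract_deployed", "large_token_acquisition")


@dataclass
class WalletEvent:
    wallet: str  # could equally be a cluster ID when wallets are grouped
    block: int
    kind: str


def find_setup_wallets(events, signature=SETUP_SIGNATURE, max_block_span=300):
    """Return wallets whose history contains `signature` as an ordered subsequence
    completed within `max_block_span` blocks of the first step."""
    by_wallet = defaultdict(list)
    for ev in sorted(events, key=lambda e: e.block):
        by_wallet[ev.wallet].append(ev)

    flagged = set()
    for wallet, evs in by_wallet.items():
        for i, start in enumerate(evs):
            if start.kind != signature[0]:
                continue
            step = 1  # index of the next signature step we are looking for
            for ev in evs[i + 1:]:
                if step == len(signature) or ev.block - start.block > max_block_span:
                    break
                if ev.kind == signature[step]:
                    step += 1
            if step == len(signature):
                flagged.add(wallet)
                break  # one complete match is enough for this wallet
    return flagged
```

Each individual event passes unnoticed. Only the ordered combination inside a short window raises a flag, which matches the point that setup transactions look ordinary one at a time.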

The Exploit Window

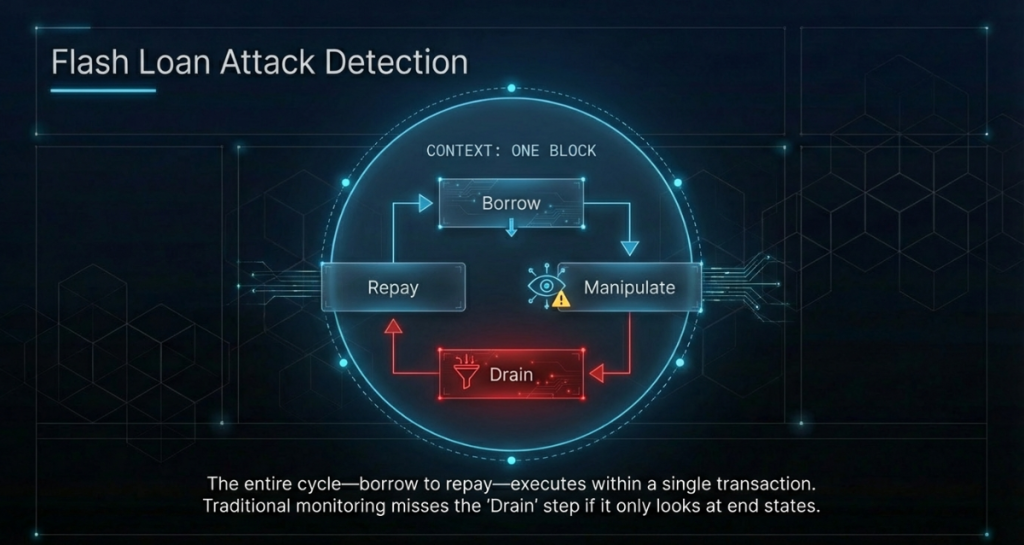

This is the critical phase, and it is where speed becomes everything. In flash loan attacks specifically, the entire sequence of borrowing, manipulating, and repaying happens inside a single transaction, so it executes atomically within one block. Once that transaction is included, there is no intermediate moment at which anyone can step in.

The attacker does not need to own any of the capital involved. They borrow it, use it, and return it in one atomic operation, draining the protocol in between. Because the attack completes the moment its transaction is included, post-incident analysis becomes the only tool available when monitoring is absent. And unfortunately, post-incident analysis does not recover funds. It only explains what happened.

The Exit and Obfuscation Layer

After the exploit, funds move through a structured sequence of hops. Bridge protocols carry assets across chains. Mixing services break transaction trails. Decentralized exchanges convert tokens to reduce traceability. Each additional hop increases time and complexity for any forensic investigation that follows. The further the funds travel from the original exploit, the harder recovery becomes.

Understanding this full lifecycle makes it clear that effective real-time monitoring must engage at the setup and exploit phases to create any real intervention opportunity.

The Core Architecture Behind Real-Time Blockchain Monitoring

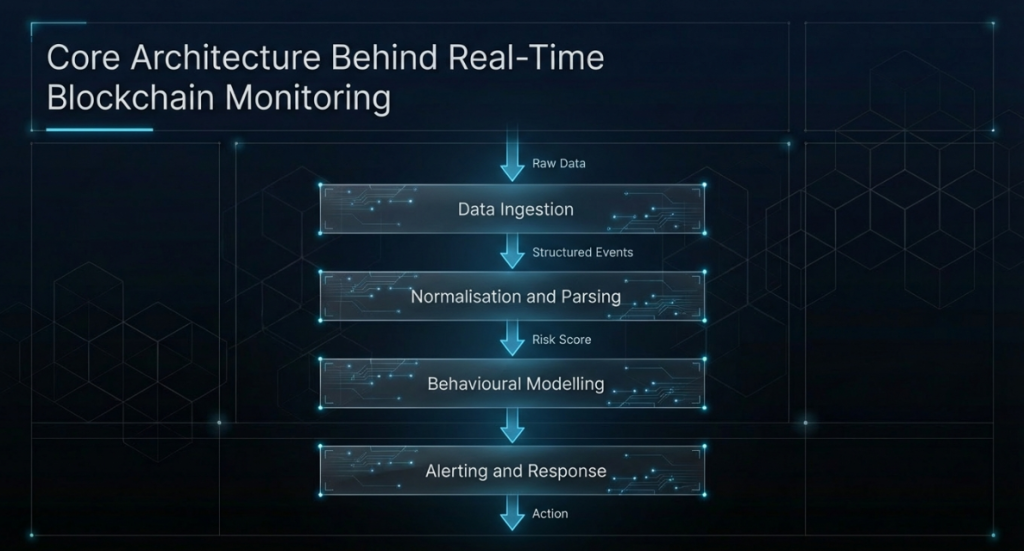

A production-grade real-time monitoring system is not a single tool. It is a layered architecture where each component handles a specific data stream and a specific threat class.

Data Ingestion Layer

At the foundation, the system pulls in raw blockchain data continuously. This includes pending transactions from the mempool, confirmed transactions as blocks are validated, smart contract event logs, and state variable snapshots taken at each block height.

For multi-chain environments, this layer also normalizes data across chains with different consensus models and data structures. Speed here is non-negotiable. A monitoring system that lags even ten seconds behind the mempool has already missed the intervention window for most flash loan attacks, which land within a single block. High-frequency data pipelines and direct node connections are essential components at this layer.

Normalization and Parsing Layer

Raw blockchain data arrives as opaque, hex-encoded bytes. ABI decoding, log parsing, and transaction input decoding must happen before any meaningful analysis is possible. This layer converts that encoded data into structured, queryable records.

Additionally, it maps contract addresses to known entities, links wallet clusters identified through prior analysis, and tags each transaction with relevant metadata such as protocol name, function called, and value transferred. This structured output is what the analytical layers above consume.
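To illustrate the decoding step, here is a minimal sketch that turns one raw ERC-20 `Transfer` log into a tagged record. It expects the JSON shape returned by the `eth_getLogs` RPC method. The topic constant is the standard keccak-256 hash of `Transfer(address,address,uint256)`. The `known_entities` lookup table and the output field names are hypothetical stand-ins for the entity-mapping step described above.

```python
from typing import Optional

# keccak256("Transfer(address,address,uint256)"): topic0 of the standard ERC-20 Transfer event
TRANSFER_TOPIC = "0xddf252ad1be2c89b69c2b068fc378daa952ba7f163c4a11628f55a4df523b3ef"


def decode_transfer_log(log: dict, known_entities: dict) -> Optional[dict]:
    """Decode one eth_getLogs entry into a tagged record, or return None if it is not
    an ERC-20 Transfer. `known_entities` (hypothetical) maps lowercase addresses to labels."""
    topics = [t.lower() for t in log["topics"]]
    # ERC-721 reuses the same topic0 but indexes tokenId as a fourth topic, so require exactly 3.
    if len(topics) != 3 or topics[0] != TRANSFER_TOPIC:
        return None
    contract = log["address"].lower()
    return {
        "contract": contract,
        "protocol": known_entities.get(contract, "unknown"),
        "event": "Transfer",
        # Indexed addresses are left-padded to 32 bytes; the address is the last 20 bytes.
        "from": "0x" + topics[1][-40:],
        "to": "0x" + topics[2][-40:],
        # The non-indexed uint256 amount is the 32-byte data field.
        "value": int(log["data"], 16),
        "block": int(log["blockNumber"], 16),
    }
```

A production decoder would be driven by each contract's ABI rather than a single hard-coded event. The mechanics are the same, though: match `topics[0]` to an event signature, then unpack indexed and non-indexed fields separately.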

Behavioral Modeling Layer

This is where the real intelligence lives. Once data is structured, the system builds behavioral profiles for contracts, wallets, and protocol-level activity. A liquidity pool has a characteristic transaction volume range. A governance contract has a typical function call frequency. A lending protocol has an expected utilization rate band. All of these form measurable baselines.

Anomaly detection then measures live activity against these baselines continuously. Deviations above configurable thresholds generate risk signals. The sophistication of this layer determines how many false positives the system generates and how quickly it catches genuine threats. Getting this layer right is where most of the engineering work lives.
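The baseline-and-deviation loop described above can be sketched with a rolling window and a z-score. This is a minimal sketch that assumes one numeric metric per interval, such as transactions per hour for a single pool. The window size, calibration minimum, and threshold of 4 are illustrative assumptions. Real systems may prefer more robust statistics and seasonality-aware baselines.

```python
import math
from collections import deque
from typing import Optional, Tuple


class RollingBaseline:
    """Rolling mean/standard deviation of one metric (e.g. hourly tx count for a pool)."""

    def __init__(self, window: int = 168, min_samples: int = 24, threshold: float = 4.0):
        self.values = deque(maxlen=window)  # oldest observations fall off automatically
        self.min_samples = min_samples      # calibration period before scoring starts
        self.threshold = threshold          # |z| at or above this is flagged

    def _z(self, value: float) -> Optional[float]:
        if len(self.values) < self.min_samples:
            return None  # still calibrating
        mean = sum(self.values) / len(self.values)
        std = math.sqrt(sum((v - mean) ** 2 for v in self.values) / len(self.values))
        if std == 0:
            return 0.0 if value == mean else math.inf
        return (value - mean) / std

    def observe(self, value: float) -> Tuple[Optional[float], bool]:
        """Score a new observation. Only non-anomalous values are added to the baseline,
        so an ongoing attack does not drag the baseline toward itself."""
        z = self._z(value)
        anomalous = z is not None and abs(z) >= self.threshold
        if not anomalous:
            self.values.append(value)
        return z, anomalous
```

Keeping flagged values out of the window is one design choice among several. The trade-off is that a genuine, permanent shift in activity will keep alerting until an operator recalibrates.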

Alerting and Response Layer

When risk signals cross defined thresholds, the system escalates according to severity. Minor deviations are logged and surfaced on a dashboard. More serious ones page the on-call security team. The most severe trigger multi-channel notification and, where automated response is configured, containment actions that run simultaneously with human notification.

This last point is critical. Speed of response directly determines how much damage is limited. Every additional block that settles after an exploit begins is another window for funds to move further from recovery.

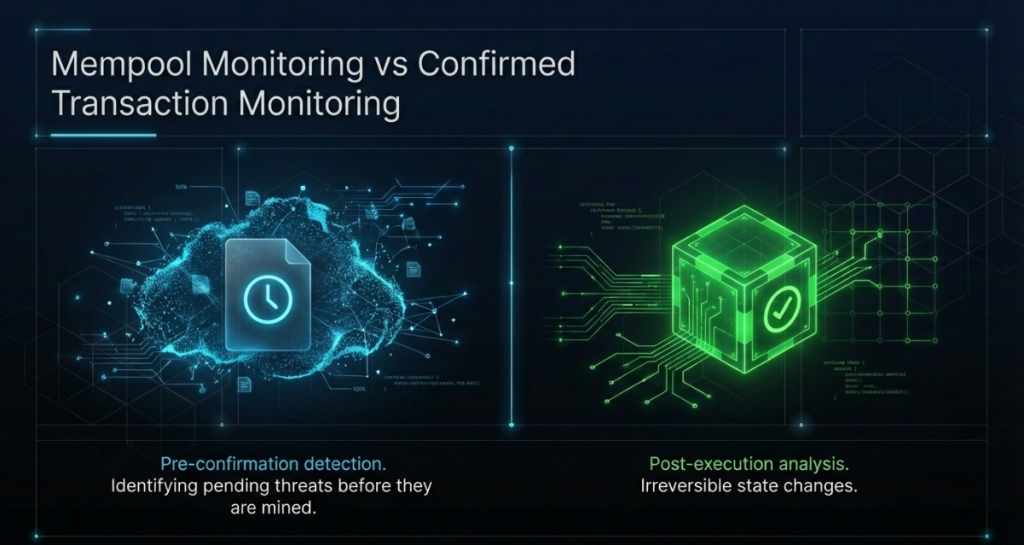

Mempool Monitoring vs Confirmed Transaction Monitoring

One of the most important technical distinctions in blockchain threat monitoring is the difference between watching the mempool and watching confirmed transactions. Both are necessary, but they serve different purposes and have different technical requirements.

What Mempool Monitoring Catches

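The mempool is where transactions wait before validators include them in a block. For most public blockchains, this data is observable by anyone running a full node. Mempool monitoring lets a system see transactions before they execute, which creates a brief but meaningful window for early warning. That window is not guaranteed. Transactions sent through private relays such as Flashbots bypass the public mempool entirely and become visible only once they are included in a block.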

For example, a pending flash loan transaction might show specific characteristics. The borrow and the follow-on calls all happen inside one transaction, so they usually become visible only by simulating the pending transaction against current state. That simulation can reveal an unusually large borrow from a specific pool, immediately followed by an interaction with a protocol that has a known price oracle dependency. A monitoring system watching the mempool can flag this pattern and alert teams, or trigger automated responses, before the block confirms.

Additionally, mempool monitoring is the only layer that can catch front-running and sandwich attacks while they are forming. These attacks depend on observing target transactions before they settle and then inserting attacker transactions around them. Confirmed-block analysis can still identify them, but only after the fact.

What Confirmed Transaction Monitoring Catches

Confirmed transaction monitoring covers the majority of threat detection use cases. After a block settles, complete transaction data becomes available, including internal calls, event emissions, and state changes. This is where behavioral anomaly detection works most effectively, because full execution traces are visible for analysis.

Confirmed transaction monitoring also supports compliance use cases particularly well. Tracking fund flows for AML purposes, generating audit trails for regulatory reporting, and building forensic records of suspicious activity all require confirmed, finalized data rather than pending mempool data.

Why Both Layers Are Needed Together

Production monitoring architectures use both layers in combination. Mempool monitoring provides the earliest possible warning signal. Confirmed transaction monitoring provides the most analytically complete picture. Teams relying only on confirmed transactions are perpetually one block behind. Teams relying only on mempool data miss the execution context that confirmed data provides. Together, the two layers create coverage that neither achieves independently.

How Behavioral Anomaly Detection Works on Chain

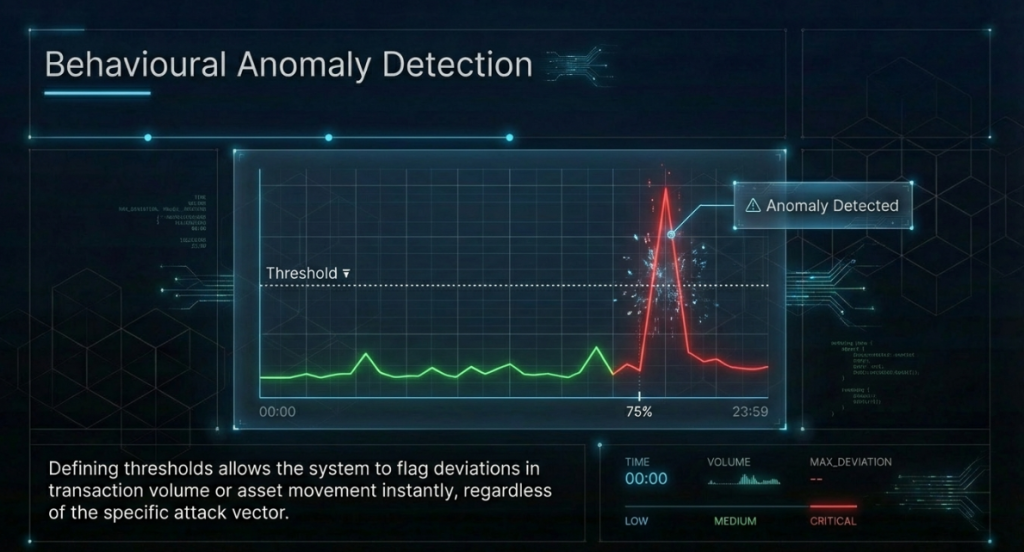

Behavioral anomaly detection is the intellectual core of real-time blockchain threat monitoring. It is worth explaining how it actually works, because most descriptions of this concept stay frustratingly vague.

Establishing a Behavioral Baseline

Every contract, wallet, and protocol has a characteristic behavioral signature under normal conditions. A DEX liquidity pool might process between five hundred and two thousand transactions per hour during typical market activity. A governance contract might receive proposals once every few days. A lending protocol’s utilization rate fluctuates within a known band across different market cycles.

A monitoring system builds these baselines by observing historical activity over a calibration period. Depending on how mature the protocol is and how much historical data is available, this calibration takes anywhere from a few days to several weeks to produce statistically reliable profiles.

Defining Anomaly Thresholds

Once baselines exist, the system needs rules defining when deviation becomes suspicious. Some thresholds are straightforward. If a contract’s transaction rate over a two-minute window suddenly runs at forty times its hourly average, that is statistically significant by any reasonable measure.
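A concrete example of the arithmetic helps. Suppose a pool averages 1,200 transactions per hour (a hypothetical figure). Its baseline rate is then 40 transactions per two minutes, so a forty-fold spike means about 1,600 transactions landing in one two-minute window. The sketch below normalizes both rates to per-hour before comparing them. The function name is illustrative.

```python
def rate_spike_ratio(window_tx_count: int, window_seconds: float, hourly_average: float) -> float:
    """Ratio of the current transaction rate to the baseline rate, both expressed per hour."""
    if hourly_average <= 0:
        raise ValueError("baseline hourly average must be positive")
    current_per_hour = window_tx_count * (3600 / window_seconds)
    return current_per_hour / hourly_average


# 1,600 transactions in a 120-second window against a 1,200/hour baseline
ratio = rate_spike_ratio(1600, 120, 1200)
```

Normalizing to a common unit first avoids a subtle mistake: comparing a two-minute count directly against an hourly average would understate the spike by a factor of thirty.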

Other thresholds are more nuanced. A sudden change in the ratio of a specific function call relative to total transactions, combined with an uptick in failed transactions from new wallets, might indicate someone probing a contract for vulnerabilities without any single signal being individually alarming.

This combination logic is what separates sophisticated monitoring from simple alerting. Real anomaly detection considers multiple signals together, weights them by historical significance, and produces a composite risk score rather than a binary alarm. That composite approach can substantially reduce false positives while improving sensitivity to genuine threats.
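One common way to build such a composite score is a weighted sum of per-signal deviations passed through a logistic function. The sketch below uses that approach. The signal names, weights, and bias are hypothetical. In practice they would be fitted to labeled historical incidents rather than set by hand.

```python
import math

# Hypothetical weights; a real system would fit these to historical incident data.
WEIGHTS = {
    "function_ratio_shift": 1.2,
    "failed_tx_from_new_wallets": 1.5,
    "volume_spike": 0.8,
}
BIAS = -4.0  # keeps the score low when no signal is elevated


def composite_risk(signals: dict) -> float:
    """Combine per-signal deviation scores (e.g. z-scores) into a 0-1 risk score.
    Negative deviations are clipped to 0 so only unusual increases add risk."""
    total = BIAS
    for name, weight in WEIGHTS.items():
        total += weight * max(0.0, signals.get(name, 0.0))
    return 1.0 / (1.0 + math.exp(-total))
```

With these weights, a lone two-sigma volume spike scores under 0.1. Two-sigma shifts in both the function-call ratio and new-wallet failures score around 0.8. This mirrors the probing scenario above, where no single signal is alarming on its own.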

Machine Learning in Anomaly Detection

Modern monitoring platforms layer machine learning models on top of rule-based detection. ML models are particularly effective at identifying subtle, multi-step attack patterns that do not match any predefined rule but share structural similarities with past exploits the model has been trained on.

For example, a well-trained model might recognize the behavioral sequence that preceded a major lending protocol exploit even when encountering a contract it has never analyzed before, because the interaction pattern of probing and draining a lending protocol follows recognizable structures across different incidents. This is how monitoring catches novel attack variants, not just known ones.
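A trained model will not fit in a short example. The underlying intuition, comparing the *structure* of a new interaction sequence with past exploits, can be shown with a much simpler stand-in that is not machine learning: Jaccard similarity over n-grams of abstracted call steps. The step labels and the reference sequence below are hypothetical. The key assumption is that contract-specific calls are first mapped to generic actions, which is what lets the comparison carry over to contracts never seen before.

```python
# Hypothetical abstracted step sequence from a past lending-protocol exploit.
KNOWN_EXPLOIT = ["flash_borrow", "swap", "swap", "deposit_collateral", "borrow", "swap", "repay_flash"]


def ngrams(steps, n=3):
    """Set of contiguous length-n step tuples."""
    return {tuple(steps[i:i + n]) for i in range(len(steps) - n + 1)}


def jaccard(a: set, b: set) -> float:
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def similarity_to_known(steps, known=KNOWN_EXPLOIT, n=3) -> float:
    """Structural similarity (0-1) between a new call sequence and a known exploit."""
    return jaccard(ngrams(steps, n), ngrams(known, n))
```

A variant that adds one extra swap before repayment still shares most of its 3-grams with the reference. A plain approve-swap-transfer flow shares none.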

Flash Loan Attack Detection: A Technical Breakdown

Flash loans represent one of the most distinctive attack vectors in decentralized finance, and they are also one of the clearest examples of why real-time monitoring requires speed above all other properties.

What Makes Flash Loans Dangerous

A flash loan allows a borrower to take any amount of an asset the lending pool has available, with zero collateral, as long as the full amount plus any fee is repaid within the same transaction. This creates a situation where an attacker can temporarily control enormous sums of capital, easily enough to move markets, drain pools, or manipulate price oracles, without needing to own any of that capital beforehand.

The atomic nature of flash loans means the entire attack happens in one transaction. If repayment fails, the whole transaction reverts and none of its state changes take effect; the attacker loses only the gas spent. If it succeeds, the attacker profits and the transaction looks, at a surface level, like an ordinary flash loan that was repaid normally. This is what makes them so challenging to prevent through static analysis alone.

Detection Signals for Flash Loan Exploits

Despite their speed, flash loan attacks leave detectable signatures that real-time monitoring systems can identify. First, transaction complexity is unusually high. A routine flash loan typically involves three to five contract interactions. A flash loan exploit often involves ten to thirty or more, because the attacker needs to borrow, swap, manipulate, drain, and repay across multiple protocols within one transaction.

Second, gas usage is characteristically elevated. More complex transactions consume significantly more gas. Flash loan exploits regularly consume ten to fifty times the gas of a standard DeFi interaction, which makes them statistically distinct from normal activity.

Third, price impact signals are distinctive. When an attacker uses a flash loan to manipulate a price oracle, they typically need to move market prices by a meaningful percentage. A monitoring system watching decentralized exchange reserves can detect abnormal price impact events in real time and correlate them with simultaneous activity on lending protocols that use those price feeds.
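For a constant-product (x*y=k) pool, the spot price is simply the ratio of its reserves, so price impact can be read directly from reserve snapshots. Examples include consecutive `Sync` events emitted by a Uniswap V2-style pair. The sketch assumes `(base, quote)` reserve ordering and uses a hypothetical 10% threshold. The lending-call count is assumed to come from the same transaction's trace.

```python
def spot_price(reserve_base: float, reserve_quote: float) -> float:
    """Spot price of the base token in quote units for a constant-product pool."""
    return reserve_quote / reserve_base


def price_move_pct(before: tuple, after: tuple) -> float:
    """Percentage change in spot price between two (base, quote) reserve snapshots."""
    p0 = spot_price(*before)
    p1 = spot_price(*after)
    return (p1 - p0) / p0 * 100


def oracle_manipulation_suspected(before, after, lending_calls_same_tx: int,
                                  threshold_pct: float = 10.0) -> bool:
    """Flag a large pool price move that coincides with lending-protocol activity
    that reads this pool as a price source (the correlation the text describes)."""
    return abs(price_move_pct(before, after)) >= threshold_pct and lending_calls_same_tx > 0
```

Here is a worked example. Consider a pool holding 1,000 ETH and 2,000,000 USDC, which prices ETH at 2,000. A swap leaves it at 500 ETH and 4,000,000 USDC. The product k is unchanged, but the spot price is now 8,000, a 300% move that no ordinary trade of that pool's size would produce.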

Fourth, and perhaps most visibly, profit extraction is transparent on-chain. After a successful flash loan exploit, funds leave the protocol and land in an attacker-controlled address in a single outflow. A large outflow immediately following a complex interaction pattern is a composite signal that is difficult to mistake for anything other than an exploit.
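The four signals above can be combined into a single heuristic. This minimal sketch reuses the figures from the text: ten or more contract calls, and at least ten times the median gas. The 5% price-move cutoff, the outflow limit, and the "outflow plus two of three" rule are hypothetical choices. `TxSummary` assumes a trace-decoding step has already filled in its fields.

```python
from dataclasses import dataclass


@dataclass
class TxSummary:
    contract_calls: int        # calls observed in the transaction trace
    gas_used: int
    max_price_move_pct: float  # largest pool price move observed inside the tx
    outflow_usd: float         # value leaving the monitored protocol


def flash_loan_exploit_signals(tx: TxSummary, median_gas: int, outflow_limit_usd: float) -> dict:
    return {
        "complex_trace": tx.contract_calls >= 10,
        "gas_outlier": tx.gas_used >= 10 * median_gas,
        "price_impact": abs(tx.max_price_move_pct) >= 5.0,  # hypothetical cutoff
        "large_outflow": tx.outflow_usd >= outflow_limit_usd,
    }


def is_likely_exploit(signals: dict) -> bool:
    # Require the outflow itself plus at least two of the three structural signals.
    others = sum(signals[k] for k in ("complex_trace", "gas_outlier", "price_impact"))
    return signals["large_outflow"] and others >= 2
```

Making the outflow mandatory keeps complex but harmless transactions, such as multi-hop arbitrage, from firing on their own.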

MEV Attack Monitoring and What Makes It Difficult

Maximal Extractable Value attacks represent one of the most technically sophisticated threat classes in blockchain environments. Monitoring for them requires understanding the validator layer in addition to user-level transaction activity, which is why many monitoring systems handle MEV poorly.

Understanding MEV in Practice

MEV refers to value that can be extracted through the ordering, inclusion, or exclusion of transactions within a block. Validators and searchers who can influence block construction can insert their own transactions before or after a target transaction to capture profit at the target’s expense.

The most common forms are front-running, where an attacker sees a profitable pending transaction and submits the same trade with higher gas to execute first, and sandwich attacks, where an attacker places one transaction before and one after a target swap to profit from the price movement the target transaction creates.

Why MEV Monitoring Is Technically Hard

MEV activity is genuinely hard to distinguish from legitimate trading at the individual transaction level. A front-running transaction looks like a completely normal swap. The malicious intent only becomes visible when you analyze the ordering relationship between the attacker’s transaction and the victim’s transaction within the same block.

Effective MEV monitoring therefore requires block-level analysis rather than individual transaction analysis. The system must reconstruct transaction ordering within each block, identify pairs or groups of transactions with suspicious positional relationships, and measure the profit differential that ordering creates. This requires considerably more computational depth than standard transaction monitoring.
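Block-level ordering analysis can be sketched as a search for the classic sandwich shape: one sender buys on a pool, a different sender buys on the same pool, then the first sender sells. This is a simplified sketch. It assumes swaps have already been decoded with their in-block position. It only looks at the buy-side variant and omits the profit-differential measurement the text mentions.

```python
from dataclasses import dataclass


@dataclass
class Swap:
    index: int      # position of the transaction within the block
    sender: str
    pool: str
    direction: str  # "buy" or "sell" of the pool's base token


def find_sandwiches(swaps):
    """Return (front, victim, back) index triples where one sender buys, another sender
    buys on the same pool, and the first sender then sells on that pool."""
    ordered = sorted(swaps, key=lambda s: s.index)
    results = []
    for i, front in enumerate(ordered):
        if front.direction != "buy":
            continue
        for j in range(i + 1, len(ordered)):
            back = ordered[j]
            if back.sender == front.sender and back.pool == front.pool and back.direction == "sell":
                victims = [v for v in ordered[i + 1:j]
                           if v.pool == front.pool and v.sender != front.sender and v.direction == "buy"]
                results.extend((front.index, v.index, back.index) for v in victims)
                break  # pair each front-run with its nearest back-run only
    return results
```

None of these swaps is suspicious in isolation. The signal exists only in their relative positions, which is why this check has to run over whole blocks.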

Despite that difficulty, MEV monitoring is increasingly important as regulators begin scrutinizing whether protocols facilitate or simply ignore manipulative trading practices that harm retail users.

Wallet Behavior Profiling and Cluster Intelligence

Individual wallet addresses are only one dimension of on-chain identity. Understanding how wallets relate to each other, and how coordinated behavior across groups of wallets signals organized malicious activity, is what wallet behavior profiling adds to the monitoring picture.

How Wallet Clustering Works

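Wallet clustering uses a combination of on-chain transaction patterns and heuristic analysis to group wallet addresses likely controlled by the same entity. The most basic heuristic, used on UTXO chains such as Bitcoin, is common input ownership. If two addresses appear as inputs to the same transaction, they are likely controlled by the same entity, because spending each input requires its private key. Account-based chains such as Ethereum have a single sender per transaction, so clustering there leans more heavily on the behavioral techniques below.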
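The common-input heuristic is naturally expressed as a union-find (disjoint-set) merge. This minimal sketch assumes each transaction is given as a list of its input addresses on a UTXO chain. The heuristic is known to break down on CoinJoin-style transactions, which deliberately combine inputs from different owners. A real pipeline would need to detect and exclude those first.

```python
class UnionFind:
    def __init__(self):
        self.parent = {}

    def find(self, x):
        self.parent.setdefault(x, x)
        while self.parent[x] != x:
            self.parent[x] = self.parent[self.parent[x]]  # path halving
            x = self.parent[x]
        return x

    def union(self, a, b):
        ra, rb = self.find(a), self.find(b)
        if ra != rb:
            self.parent[rb] = ra


def cluster_by_common_inputs(transactions):
    """transactions: iterable of input-address lists. Returns a list of address sets."""
    uf = UnionFind()
    for inputs in transactions:
        for addr in inputs:
            uf.find(addr)  # register single-input addresses too
        for addr in inputs[1:]:
            uf.union(inputs[0], addr)
    clusters = {}
    for addr in list(uf.parent):
        clusters.setdefault(uf.find(addr), set()).add(addr)
    return list(clusters.values())
```

Clustering is transitive. If `a` and `b` co-spend in one transaction and `b` and `c` in another, all three end up in one cluster even though `a` and `c` never appear together.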

More advanced clustering uses behavioral fingerprinting. Wallets that consistently interact with the same contracts in the same sequence, or that show coordinated fund movements within narrow time windows, often belong to the same operational network even when they share no direct on-chain connection. Timing correlation, interaction overlap, and fund flow patterns together create a profile that reveals entity relationships invisible at the individual wallet level.

What Cluster Analysis Catches

Cluster intelligence is particularly valuable for detecting Sybil attacks, wash trading operations, coordinated governance manipulation, and multi-wallet exploit setups. When a cluster of wallets begins collectively accumulating positions in a specific protocol before a suspicious event, that collective pattern is a meaningful early warning signal that no single-wallet analysis would catch on its own.

Furthermore, cluster analysis is essential for asset recovery after an exploit. When attackers try to obscure stolen funds by distributing them across dozens of addresses, cluster intelligence helps reconstruct the relationships between those wallets and can preserve traceability through even complex distribution patterns.

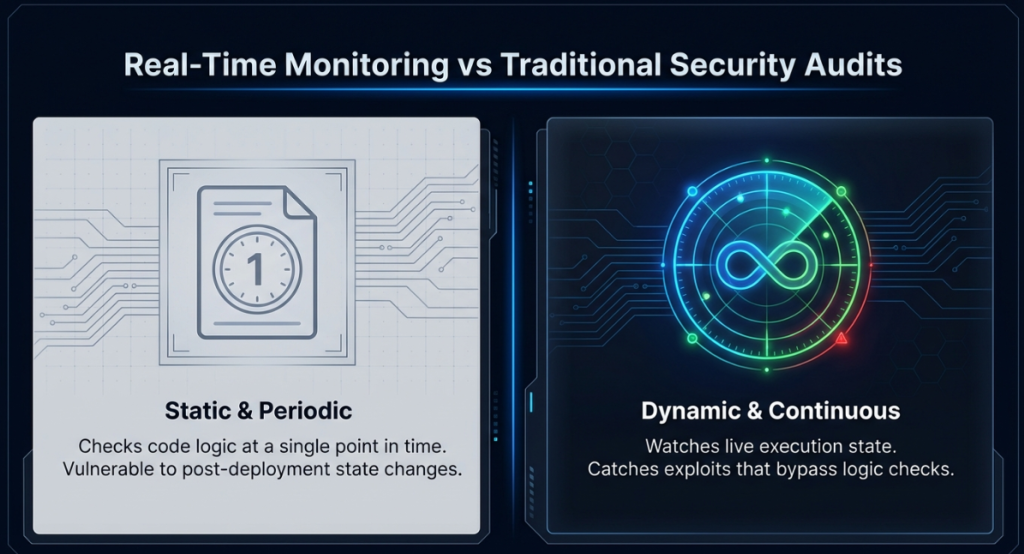

Real-Time Monitoring vs Traditional Security Audits

Understanding the relationship between real-time monitoring and traditional security auditing matters for teams building complete security programs. These two approaches are complementary rather than competing, and it is important to understand exactly why both are needed.

| Dimension | Traditional Security Audit | Real-Time Threat Monitoring |
|---|---|---|
| When it operates | Pre-deployment, one time | Post-deployment, continuously |
| What it analyzes | Source code and logic | Live transaction and behavioral data |
| Detection method | Manual and automated code review | Behavioral anomaly detection |
| Response speed | Days to weeks for a report | Seconds to minutes for alerts |
| Coverage scope | Known vulnerability patterns | Known and novel attack patterns |
| Ongoing protection | None after delivery | Continuous, indefinite |
| Regulatory documentation | Audit report | Incident logs and compliance data |
| Exploit prevention | Reduces code-level risk | Detects and responds to runtime threats |

The practical reality is that even a thoroughly audited contract can be exploited when market conditions create economic attack vectors that auditors could not model in advance. Price oracle manipulation, governance attacks, and economic design flaws often only emerge under specific live market conditions that are impossible to simulate fully before deployment.

This is precisely why both layers are necessary. An audit reduces the probability of a code-level vulnerability making it to production. Monitoring catches what the audit missed, or what became exploitable only after deployment into a live, changing market environment. Teams running audits without monitoring are protected at deployment and increasingly exposed from that point forward. Teams running monitoring without audits are catching runtime threats while potentially shipping preventable code-level vulnerabilities. The complete program uses both.

Event-Driven Alert Systems: How They Actually Work

Event-driven alert systems are the operational output layer of real-time blockchain monitoring. They are the mechanism through which analysis becomes action, and their design quality has a major impact on how useful monitoring actually is day to day.

What Triggers an Event-Driven Alert

Alerts can be triggered by several different signal types. Rule-based triggers fire when specific conditions are met, such as a withdrawal exceeding a defined threshold, a contract being called by an address that has never interacted with it before, or a function being invoked in a sequence that matches a known exploit pattern.

Anomaly-based triggers, by contrast, fire when behavioral risk scores cross configured thresholds. These are more flexible than rule-based triggers because they do not require advance knowledge of the specific attack pattern. Instead, they respond to statistical deviation from expected behavior, which means they can catch novel attacks that no predefined rule would recognize.

Composite triggers combine multiple signals and are generally the most powerful. A single large withdrawal might not trigger an alert on its own. But that same withdrawal combined with an unusual preceding sequence of function calls, occurring immediately after a detected price oracle deviation, creates a composite signal strong enough to warrant immediate escalation.
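The withdrawal scenario above can be sketched as a temporal correlation over a stream of already-detected signals. This is a minimal sketch. The signal kinds and the three-block lookback are hypothetical. The window includes the withdrawal's own block, because in flash loan attacks every step can land in the same block.

```python
from dataclasses import dataclass


@dataclass
class Signal:
    block: int
    kind: str    # e.g. "oracle_deviation", "unusual_call_sequence", "large_withdrawal"
    target: str  # protocol or contract the signal refers to


def composite_trigger(signals, target: str, lookback_blocks: int = 3) -> bool:
    """Fire when a large withdrawal from `target` is accompanied, at or before its block
    and within `lookback_blocks`, by both an oracle deviation and an unusual call sequence."""
    relevant = [s for s in signals if s.target == target]
    for w in relevant:
        if w.kind != "large_withdrawal":
            continue
        window = {s.kind for s in relevant if w.block - lookback_blocks <= s.block <= w.block}
        if {"oracle_deviation", "unusual_call_sequence"} <= window:
            return True
    return False
```

Any one of these signals could appear on a normal day. The trigger fires only when all three line up on the same target within a few blocks.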

Alert Routing and Escalation Logic

Not all alerts warrant the same response, and effective systems route them differently based on assessed severity. Low-severity signals log to a monitoring dashboard and send a low-priority notification. Medium-severity signals escalate to the on-call security team through Slack or PagerDuty with a defined response window. High-severity signals trigger immediate multi-channel notification and, where automated response is configured, initiate containment actions simultaneously with human notification.
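The three-tier routing above can be sketched as a mapping from a 0-1 risk score to a list of actions. The 0.5 and 0.85 cut points and the action names are hypothetical placeholders. A real deployment would replace the strings with calls to its own Slack, PagerDuty, or webhook integrations.

```python
from enum import Enum


class Severity(Enum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


def classify(risk_score: float, medium_at: float = 0.5, high_at: float = 0.85) -> Severity:
    if risk_score >= high_at:
        return Severity.HIGH
    if risk_score >= medium_at:
        return Severity.MEDIUM
    return Severity.LOW


def route(risk_score: float, auto_containment_enabled: bool = False) -> list:
    """Return the ordered actions for an alert. Action names are placeholders."""
    severity = classify(risk_score)
    actions = ["log_to_dashboard"]
    if severity is Severity.LOW:
        actions.append("notify_low_priority")
    elif severity is Severity.MEDIUM:
        actions.append("page_oncall")
    else:
        actions += ["page_oncall", "notify_all_channels"]
        if auto_containment_enabled:
            actions.append("trigger_containment")  # runs alongside, not after, human paging
    return actions
```

Containment is gated behind an explicit flag. Automated pausing is a configuration decision, not a default.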

The quality of this escalation logic ultimately determines whether security teams trust their monitoring system or treat it as an unreliable alarm that cries wolf. Too many false positives and teams start ignoring alerts. Too few and the system is missing real threats. Calibrating thresholds based on ongoing operational feedback is a continuous process rather than a one-time configuration task.

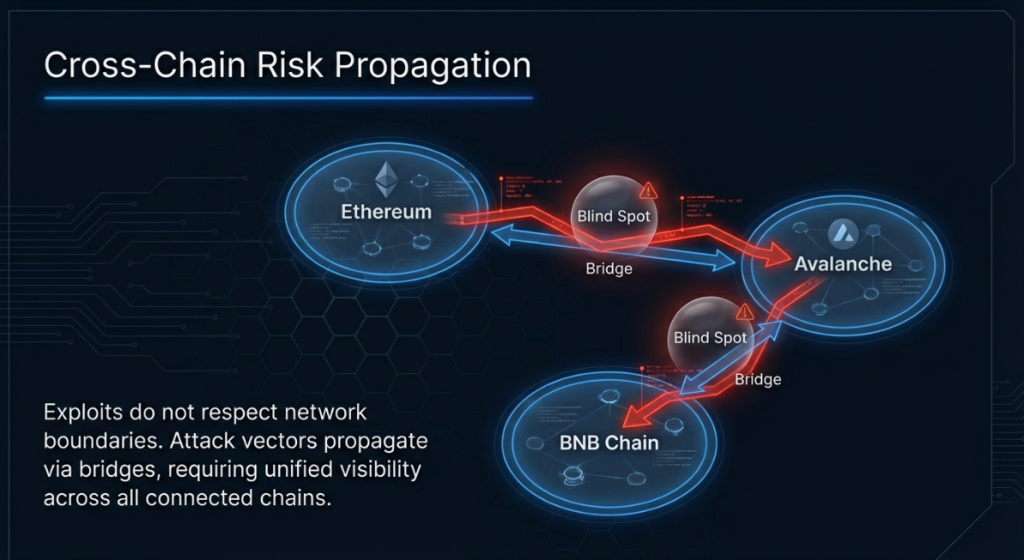

Cross-Chain Risk Propagation and Why It Is Overlooked

Most blockchain security programs monitor one chain. As multi-chain and cross-chain architectures have become standard across DeFi, exchanges, and institutional infrastructure, this single-chain approach represents a growing and often underappreciated gap.

How Attacks Travel Across Chains

Cross-chain risk propagation happens in several distinct ways. First, bridge protocols themselves are high-value targets. When a bridge is exploited, funds from one chain appear on another chain in ways that look like ordinary bridge transactions to monitoring systems watching each chain independently.

Second, attackers routinely use bridges as part of their post-exploit laundering strategy. Moving funds from Ethereum to Avalanche to BNB Chain makes forensic tracing significantly harder, especially when each chain is monitored in isolation with no shared intelligence layer connecting the observations.

Third, and less obviously, economic conditions on one chain can directly create attack opportunities on another. A price oracle on Ethereum that is manipulated through a flash loan can affect asset prices referenced by protocols on other chains that rely on cross-chain price feeds. The attack originates on one chain and the damage lands on another.

What Cross-Chain Monitoring Requires

Effective cross-chain monitoring requires a unified data layer that ingests activity from multiple chains and correlates it within a single analytical framework. When a wallet on Ethereum sends funds to a bridge and a new wallet on Polygon receives an equivalent amount shortly after, the monitoring system needs to recognize that as one entity moving funds, not two unrelated events on two separate chains.
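The wallet-to-bridge-to-new-wallet example can be sketched as a matching step over bridge events normalized from several chains. This is a heuristic sketch under stated assumptions. Amounts are already converted to a common unit. The 2% tolerance (to absorb bridge fees) and the 30-minute delay limit are hypothetical. Where a bridge exposes a transfer identifier linking both sides, matching on that is far more reliable than matching on amount and timing.

```python
from dataclasses import dataclass


@dataclass
class BridgeEvent:
    chain: str
    address: str
    amount: float   # normalized to a common unit, e.g. USD
    timestamp: int  # unix seconds
    direction: str  # "out" (deposit into bridge) or "in" (release from bridge)


def link_bridge_hops(events, max_delay_s: int = 1800, tolerance: float = 0.02):
    """Pair outbound bridge deposits with inbound releases on a different chain whose
    amount is within `tolerance` and that arrive within `max_delay_s` seconds."""
    outs = [e for e in events if e.direction == "out"]
    ins = [e for e in events if e.direction == "in"]
    links = []
    for o in outs:
        for i in ins:
            delay = i.timestamp - o.timestamp
            if (i.chain != o.chain
                    and 0 <= delay <= max_delay_s
                    and abs(i.amount - o.amount) <= tolerance * o.amount):
                links.append((o.chain, o.address, i.chain, i.address, delay))
    return links
```

Matching on amount and timing alone can produce false links during busy periods. Its output is best treated as a lead for cluster analysis rather than proof of common ownership.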

This unified view is technically complex but operationally essential for teams running infrastructure across more than one chain. Without it, sophisticated attackers simply shift their most sensitive activity to whichever chain has the weakest monitoring coverage at any given time.

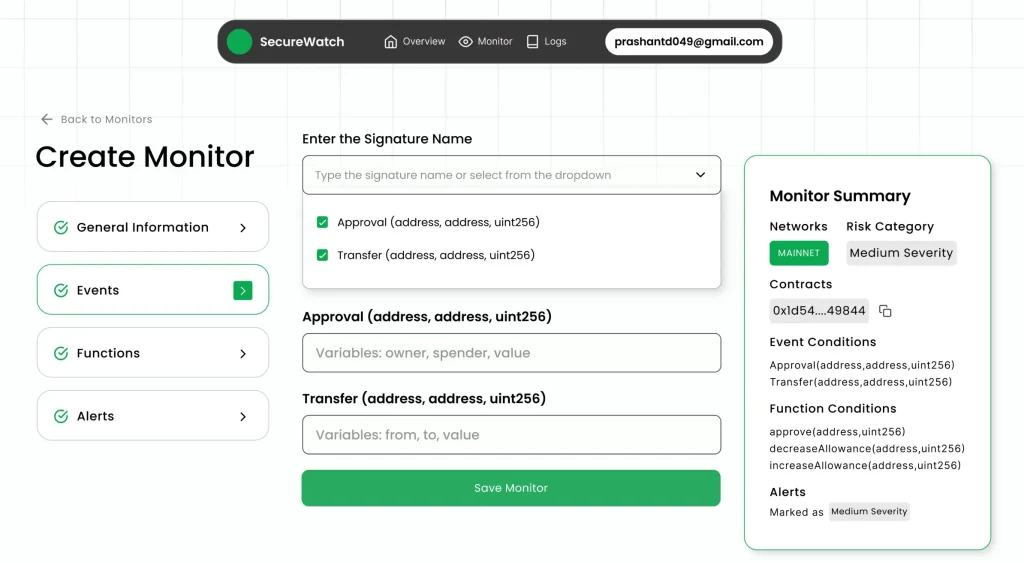

How SecureWatch Operationalizes This Framework

Translating the architecture described throughout this guide into a working, production-grade system requires substantial engineering depth and sustained operational experience. This is precisely where purpose-built platforms provide meaningful advantages over teams attempting to build monitoring capabilities from scratch internally.

SecureWatch, developed by SecureDApp, operationalizes the complete framework covered in this guide. It applies AI-driven behavioral modeling and transaction pattern analysis to detect the threat classes described here: unauthorized contract interactions, abnormal transaction flow sequencing, suspicious wallet clustering, contract parameter tampering, privilege escalation attempts, and exploit signatures targeting live deployments.

What makes SecureWatch particularly significant among blockchain security infrastructure is its automated containment capability. When observed activity deviates from its behavioral baseline beyond predefined thresholds, SecureWatch can trigger smart contract pause mechanisms directly, stopping further state manipulation before additional funds are drained. This requires the protected contract to expose a pause function that the platform is authorized to call. This capability transforms the platform from a passive observer into an active defense layer, which is a meaningful distinction when exploits are measured in blocks rather than minutes.

SecureWatch holds a Government of India patent for its post-deployment blockchain security methodology, reflecting genuine technical originality in its approach to runtime defense. It integrates directly into DevSecOps workflows and operates continuously across supported chains, meaning security teams get persistent coverage without manually managing monitoring tasks.

For enterprises seeking both preventive and reactive security coverage, SecureDApp’s broader product stack provides full lifecycle protection. Solidity Shield handles pre-deployment audit and vulnerability detection through AI-powered smart contract analysis. SecureWatch handles post-deployment continuous monitoring. SecureTrace handles forensic investigation when incidents do occur. Together, these layers cover the complete security lifecycle from code review to live runtime defense to post-incident investigation and documentation.

Enterprise Implications and Regulatory Alignment

As blockchain infrastructure moves into regulated financial environments, real-time threat monitoring is transitioning from a security best practice to something closer to a compliance requirement. Understanding the regulatory dimension is increasingly important for institutional teams.

AML and Transaction Monitoring Requirements

Financial regulators in multiple jurisdictions now expect that entities handling digital assets maintain transaction monitoring capabilities that can identify suspicious activity and generate reports for regulatory review. The specific thresholds and reporting formats vary by jurisdiction, but the underlying expectation, continuous monitoring with structured documentation, is consistent across most major regulatory frameworks.

Real-time blockchain threat monitoring platforms generate exactly the kind of timestamped, event-specific logs that compliance teams need to demonstrate adherence to these requirements. Manual review processes simply cannot produce the same level of consistency or coverage depth at any reasonable operational scale.

Incident Response and Risk Governance

Enterprise risk governance frameworks increasingly require documented incident response plans for cyber events, including blockchain-specific incidents. Real-time monitoring is a prerequisite for any meaningful incident response capability, because a team cannot respond to an incident it only learns about after the incident is over.

Moreover, insurers offering cyber liability coverage for digital asset operations are beginning to ask specifically whether policyholders have continuous monitoring in place. The presence or absence of real-time monitoring is becoming a factor in both coverage eligibility and premium pricing, adding a direct financial dimension to the monitoring decision.

Cost Reduction Through Early Detection

The financial case for real-time monitoring is straightforward when you consider the cost differential between early detection and post-exploit recovery. When monitoring catches an attack in progress and automated containment reduces the exploit window from minutes to seconds or blocks, the potential loss is dramatically smaller than in a scenario where the attack runs to completion before anyone responds.

Beyond direct exploit losses, post-incident costs include forensic investigation fees, legal costs, regulatory reporting obligations, reputation damage, and in some cases user compensation. Early detection reduces all of these downstream costs simultaneously, making the return on investment calculation relatively clear for any team that has honestly priced the full cost of an incident.

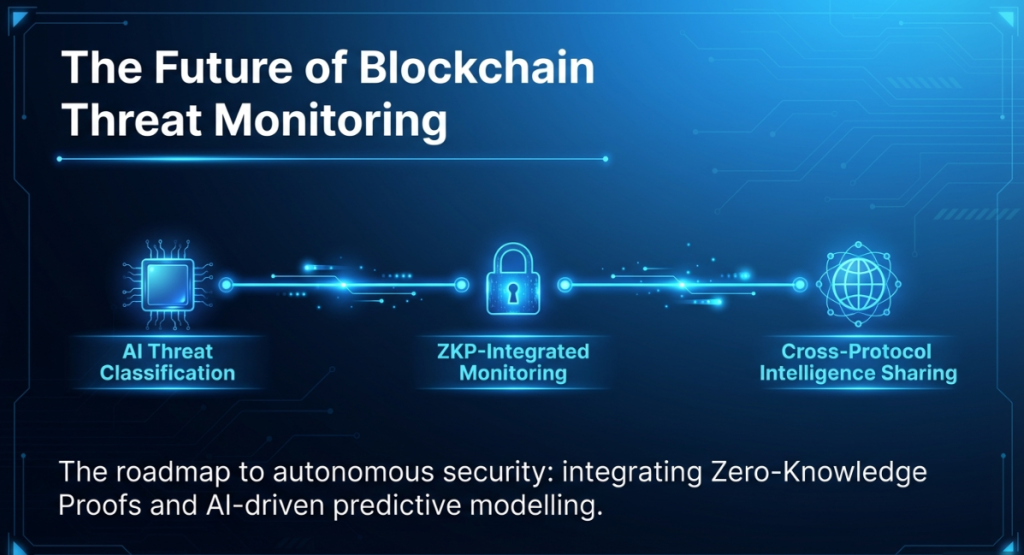

The Future of Blockchain Threat Monitoring

The threat landscape is not static, and neither is monitoring technology. Several trends are worth tracking for teams thinking about where blockchain security is heading over the next two to three years.

AI-Driven Threat Classification

Current anomaly detection systems flag deviations from a behavioral baseline but still depend on human judgment to decide whether a flagged event is a genuine threat or a false positive. The next generation of monitoring platforms is likely to close this gap using AI models trained on labeled historical attack data, classifying threats with enough confidence to trigger automated responses without human review of every alert.

This shift should significantly reduce the operational burden on security teams and shorten response times, particularly during off-hours when human coverage is limited. As training datasets grow with more documented incidents, classification accuracy can be expected to keep improving.
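The move from "flag for a human" to "respond automatically" can be expressed as a confidence policy that sits between the classifier and the response layer. The sketch below shows one such policy. It assumes that some model (not shown) returns a calibrated probability that an alert is a genuine threat. `TriagePolicy`, the three tiers, and the 0.95 and 0.60 thresholds are hypothetical choices, not any platform's actual design.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    AUTO_RESPOND = "auto_respond"   # e.g. trigger a pause without waiting for an analyst
    HUMAN_REVIEW = "human_review"   # queue the alert for a person to classify
    LOG_ONLY = "log_only"           # record it for baselining and later review


@dataclass(frozen=True)
class TriagePolicy:
    auto_threshold: float = 0.95    # hypothetical; tuned per deployment
    review_threshold: float = 0.60  # hypothetical

    def __post_init__(self) -> None:
        if not 0.0 <= self.review_threshold <= self.auto_threshold <= 1.0:
            raise ValueError("require 0 <= review_threshold <= auto_threshold <= 1")

    def route(self, threat_probability: float) -> Action:
        """Map a classifier's threat probability to a response tier."""
        if threat_probability >= self.auto_threshold:
            return Action.AUTO_RESPOND
        if threat_probability >= self.review_threshold:
            return Action.HUMAN_REVIEW
        return Action.LOG_ONLY


policy = TriagePolicy()
for p in (0.99, 0.70, 0.20):
    print(p, policy.route(p).value)
```

Keeping a middle tier for human review lets a team raise the automation threshold gradually as the classifier earns trust, rather than switching from fully manual to fully automated in one step.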

Zero-Knowledge Proof Integration for Privacy-Preserving Monitoring

As privacy-enhancing technologies become more integrated into blockchain infrastructure, monitoring systems will need to adapt to environments where transaction details are partially or fully obfuscated. Zero-knowledge proofs (ZKPs) allow parties to prove properties about transactions without revealing the underlying data. Future monitoring systems will need to operate within ZKP-enabled infrastructure, using proof verification and metadata analysis to detect suspicious patterns even when full transaction data is not visible.

This is a significant technical challenge that the security research community is actively engaged with, and it will likely produce new monitoring methodologies that do not yet exist in commercial form.

Regulatory Technology Convergence

The gap between blockchain security platforms and regulatory compliance platforms is narrowing steadily. Regulators want real-time visibility into digital asset activity, and security platforms already provide that visibility. The natural outcome is platforms that serve security and compliance functions simultaneously, generating monitoring intelligence that can be shared directly with regulators, in approved formats, on a continuous basis.

This convergence will make real-time monitoring a standard infrastructure component for any enterprise operating in regulated digital asset environments, much as security information and event management (SIEM) systems are now standard in the security architectures of traditional financial services.

Cross-Protocol Threat Intelligence Sharing

Currently, most blockchain monitoring happens in isolation. Each protocol monitors its own activity without access to signals from other protocols. But attacks frequently cross protocol boundaries, using one protocol as a stepping stone to exploit another.

The emerging model is collaborative threat intelligence sharing, where protocols and monitoring platforms contribute anonymized threat signal data to a shared intelligence layer. When an attack pattern is detected targeting one protocol, that signature becomes immediately available to all participants in the intelligence network, shortening detection windows across the entire ecosystem. This kind of collective defense posture represents a meaningful evolution beyond what any individual monitoring deployment can achieve on its own.
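A shared intelligence layer can be pictured as a registry keyed by attack-pattern fingerprints. The in-memory sketch below fingerprints a pattern by hashing its ordered, protocol-agnostic steps. It records which participants reported each pattern under keyed-hash pseudonyms, so a signature published by one protocol is immediately visible to every other participant. `SharedIntelRegistry`, the step labels, and the exact-match fingerprint are hypothetical simplifications. Keyed hashing is pseudonymization, which is a weaker property than true anonymization. A production system would also need fuzzier matching than an exact hash.

```python
import hashlib
import hmac
from dataclasses import dataclass, field


def pattern_signature(steps: list[str]) -> str:
    """Fingerprint an attack pattern from its ordered, protocol-agnostic steps."""
    normalized = "|".join(step.strip().lower() for step in steps)
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


@dataclass
class SharedIntelRegistry:
    """In-memory stand-in for a shared threat intelligence layer (hypothetical design)."""
    network_key: bytes  # secret held by the registry operator, never shared with participants
    _reports: dict[str, set[str]] = field(default_factory=dict, init=False, repr=False)

    def _pseudonym(self, participant_id: str) -> str:
        # Keyed hash: without the key, a pseudonym cannot be linked back to a protocol
        digest = hmac.new(self.network_key, participant_id.encode("utf-8"), hashlib.sha256)
        return digest.hexdigest()[:16]

    def publish(self, participant_id: str, steps: list[str]) -> str:
        """Record a detected pattern; it becomes visible to all participants at once."""
        sig = pattern_signature(steps)
        self._reports.setdefault(sig, set()).add(self._pseudonym(participant_id))
        return sig

    def is_known(self, steps: list[str]) -> bool:
        return pattern_signature(steps) in self._reports

    def report_count(self, steps: list[str]) -> int:
        """Number of distinct participants that have reported this pattern."""
        return len(self._reports.get(pattern_signature(steps), set()))


registry = SharedIntelRegistry(network_key=b"demo-key-not-for-production")
attack = ["flash_loan", "swap", "oracle_read", "borrow", "repay"]  # hypothetical step labels
registry.publish("protocol-a", attack)
print(registry.is_known(attack), registry.report_count(attack))
# prints: True 1
```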

FAQ: Real-Time Threat Monitoring for Blockchain

What is real-time threat monitoring for blockchain?

Real-time threat monitoring for blockchain is continuous, automated surveillance of on-chain activity, including transactions, contract events, and wallet behaviors, designed to detect and respond to threats as they occur rather than hours or days after the fact. It combines behavioral modeling, anomaly detection, and automated response capabilities into a unified security layer.

How does real-time threat monitoring differ from a smart contract audit?

A smart contract audit is a pre-deployment code review that identifies vulnerabilities in contract logic. Real-time threat monitoring operates after deployment, watching live activity and detecting threats that emerge from how contracts are used in actual market conditions, including attack vectors that audits cannot predict. Both are necessary, and they serve complementary rather than overlapping functions.

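Can real-time monitoring stop a flash loan attack?

Real-time monitoring can detect the characteristic signals of a flash loan exploit in progress, including abnormal transaction complexity, elevated gas usage, and price oracle deviation, and trigger automated responses such as pausing the contract to limit damage. Because a flash loan is borrowed and repaid within a single transaction, a pause usually cannot reverse that transaction once it is mined. The savings come from blocking follow-up transactions or, when the attack is spotted in the mempool, from getting the pause included first. In many cases this significantly reduces exploit losses even when the initial transaction cannot be stopped. The faster the response mechanism, the smaller the exploit window.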
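The three signals listed above can be combined into a simple rule. The Python sketch below compares a transaction's internal call count and gas usage against a per-protocol baseline. It also checks how far the oracle price has moved from an independent reference price, and it recommends a pause only when at least two signals agree. The `TxFeatures` and `Baseline` fields, the multipliers, and the 5% deviation limit are hypothetical. The sketch covers detection only: a real pause still has to be submitted and included on-chain before it has any effect.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TxFeatures:
    """Features a monitor might extract from a transaction (hypothetical schema)."""
    internal_calls: int       # proxy for transaction complexity
    gas_used: int
    oracle_price: float       # price the protocol's oracle reports after the transaction
    reference_price: float    # independent reference, e.g. a time-weighted average price


@dataclass(frozen=True)
class Baseline:
    """Typical values for this protocol, learned during calibration."""
    typical_internal_calls: int
    typical_gas_used: int


def flash_loan_signals(tx: TxFeatures, base: Baseline,
                       complexity_factor: float = 5.0,
                       gas_factor: float = 3.0,
                       max_oracle_deviation: float = 0.05) -> list[str]:
    """Return the names of the signals that fire for this transaction."""
    if tx.reference_price <= 0:
        raise ValueError("reference_price must be positive")
    fired = []
    if tx.internal_calls > complexity_factor * base.typical_internal_calls:
        fired.append("abnormal_complexity")
    if tx.gas_used > gas_factor * base.typical_gas_used:
        fired.append("elevated_gas")
    deviation = abs(tx.oracle_price - tx.reference_price) / tx.reference_price
    if deviation > max_oracle_deviation:
        fired.append("oracle_deviation")
    return fired


def should_pause(signals: list[str], min_signals: int = 2) -> bool:
    # Requiring independent signals to agree trades some sensitivity for fewer false pauses
    return len(signals) >= min_signals


base = Baseline(typical_internal_calls=8, typical_gas_used=150_000)
suspect = TxFeatures(internal_calls=120, gas_used=2_400_000,
                     oracle_price=1.32, reference_price=1.00)
signals = flash_loan_signals(suspect, base)
print(signals, should_pause(signals))
# prints: ['abnormal_complexity', 'elevated_gas', 'oracle_deviation'] True
```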

How long does it take to set up real-time blockchain threat monitoring?

Setup time varies by platform and integration complexity. Cloud-based platforms with API-driven onboarding can be operational within days. More complex enterprise deployments with custom integrations into existing security infrastructure may take several weeks. The behavioral baseline calibration period, typically two to four weeks of observed activity, is the primary factor affecting how quickly the system reaches optimal detection accuracy.
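The calibration period exists because anomaly scores only mean something against a baseline built from enough normal activity. The class below is a minimal sketch that assumes a single numeric metric per observation (for example, hourly outflow from a contract) and a simple z-score test. It stays silent until its calibration window has elapsed, then flags values far from the running mean. It uses Welford's algorithm, which keeps the running mean and variance numerically stable without storing history. `BehavioralBaseline`, the two-week default, and the z-score threshold of 4 are illustrative assumptions, not how any particular platform models behavior.

```python
import math
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class BehavioralBaseline:
    """Streaming baseline for one metric, using Welford's running mean/variance."""
    calibration_seconds: float = 14 * 24 * 3600  # hypothetical: two weeks of observation
    z_threshold: float = 4.0                     # hypothetical alert threshold
    _first_ts: Optional[float] = field(default=None, init=False, repr=False)
    _count: int = field(default=0, init=False, repr=False)
    _mean: float = field(default=0.0, init=False, repr=False)
    _m2: float = field(default=0.0, init=False, repr=False)  # sum of squared deviations

    def is_calibrated(self, timestamp: float) -> bool:
        # Needs enough elapsed time and at least two samples for a sample variance
        return (self._first_ts is not None
                and timestamp - self._first_ts >= self.calibration_seconds
                and self._count >= 2)

    def observe(self, timestamp: float, value: float) -> bool:
        """Score `value` against the baseline, then fold it in if it looks normal.
        Returns True only when calibration is complete and the value is an outlier."""
        if self._first_ts is None:
            self._first_ts = timestamp
        is_anomaly = False
        if self.is_calibrated(timestamp):
            std = math.sqrt(self._m2 / (self._count - 1))
            is_anomaly = std > 0 and abs(value - self._mean) / std > self.z_threshold
        if not is_anomaly:
            # Welford update; flagged values are excluded so an attack cannot shift the baseline
            self._count += 1
            delta = value - self._mean
            self._mean += delta / self._count
            self._m2 += delta * (value - self._mean)
        return is_anomaly
```

Flagged values are deliberately kept out of the baseline so that a sustained attack cannot gradually redefine itself as normal. The trade-off is that a legitimate, permanent shift in activity has to be handled by recalibrating.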